EASA and AI: Human-Centric with Ethics, without Bias

“EASA has demonstrated a proactive approach to paving the way for introducing AI in aviation,” stated Professor Peter Hecker, Chair of the EASA Scientific Committee and VP Research and Early Career Scientists at Technische Universität Braunschweig, Germany. Hecker delivered the keynote at the EASA Artificial Intelligence Days – High-Level Conference in July 2024.

The two-day conference focused on the EASA AI Roadmap 2.0 and the Concept Paper “First usable guidance for Level 1 & 2 machine learning applications”.

Roadmap 2.0, published in May 2023, and the second Concept Paper, issued in March 2024 (incorporating 900 comments from 34 stakeholders), reflect nearly six years of discussion among hundreds of experts from the European Union Aviation Safety Agency (EASA), the aviation industry, academia and other stakeholders.

In October 2018, the Agency established an internal task force on Artificial Intelligence to assess the opportunities and challenges created by introducing AI in aviation, and the actions the Agency should take to meet those challenges.

The task force published EASA AI Roadmap 1.0 in February 2020, followed in December 2021 by the Concept Paper “First usable guidance for Level 1 machine learning applications”.

The EASA effort preceded the European Commission’s formal proposal for an AI regulatory framework (April 2021). The European Parliament adopted the Artificial Intelligence Act in March 2024, and the Council approved it in May 2024. The Act will be fully applicable 24 months after entry into force, with some provisions applying sooner. ‘High-risk’ systems, however, which include aviation, automobiles, medical devices, lifts/elevators and, curiously, toys, will have more time to comply.

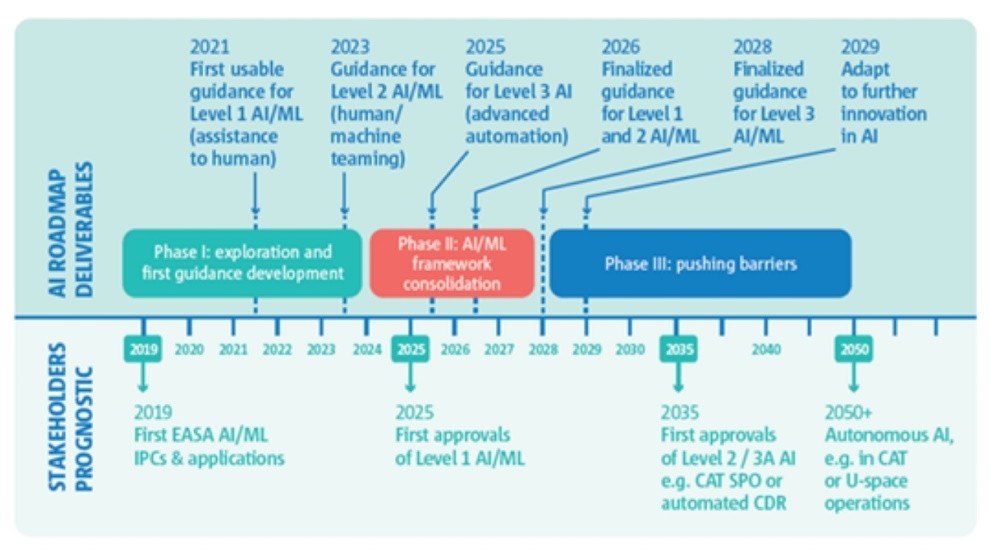

Key future milestones in the EASA Roadmap are:

- 2025: first approvals of Level 1 AI/ML, and guidance for Level 3 AI (advanced automation)

- 2035: first approvals of Level 2/3A AI

- 2050: autonomous AI in commercial air transport (CAT) or U-space operations

According to Prof. Hecker, Concept Paper 3 is in preparation and will include final guidance for the Human Factors for AI building block.

EARLY CERTIFICATION PROJECTS

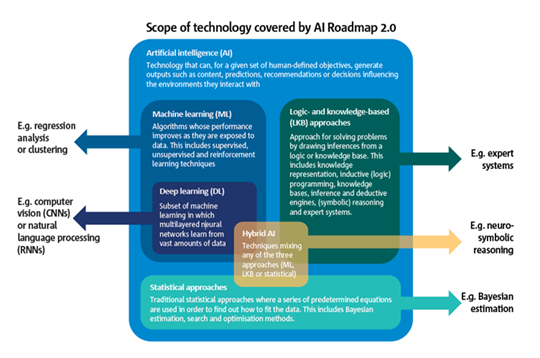

The EU Artificial Intelligence Act and EASA define AI as “technology that can, for a given set of human-defined objectives, generate outputs such as content, predictions, recommendations, or decisions influencing the environments they interact with.”

EASA believes AI will enable “us to create intelligent systems that can provide advanced assistance solutions to human end users, optimise aircraft performance, improve air traffic management and in turn enhance safety in ways that were previously unimaginable.”

The Concept Papers have already led to “approval and deployment of safety-related AI systems for end-user support (pilots, ATCOs, etc.) and… certification projects through special conditions.”

Use cases highlighted at the High-Level Conference included:

- Conflict detection and conflict resolution between aircraft trajectories

- A TopSky sequencer aimed at minimizing airport approach and ground delays

- Contrail forecasting and mitigation

- Detecting and mitigating pilot incapacitation, particularly for single-pilot operations

- An intelligent cockpit assistant to help pilots manage the ‘startle’ response

- An automated taxiing system

Computer vision and natural language processing (NLP), the EASA working group suggested, “can increase the capabilities to make sense out of sensor data.” In aviation, this might lead to high-resolution camera-based traffic detection or assistance in ATC communication through speech-to-text capability. “Hybridization of deep learning solutions with logic- and knowledge-based approaches open a promising path to efficient decision-making support, in view of enhanced virtual assistance to the pilot.”

Also, “AI may assist the crew by advising on routine tasks (e.g. flight profile optimization) or providing enhanced advice on aircraft management issues or flight tactical nature, helping the crew to take decisions in particular in high-workload circumstances (e.g. go-around or diversion). AI may also support the crew by anticipating and preventing some critical situations according to the operational context and the crew health situation (e.g. stress, health, etc.).”

“To ensure safe operations, crew training is another essential consideration. The use of AI gives rise to adaptive training solutions, where ML could enhance the effectiveness of training activities by leveraging the large amount of data collected during training and operations.”

Beyond this, the Roadmap so far makes relatively little reference to the training of pilots, maintenance personnel, cabin crew or other aviation professionals.

The most discussed application of AI, noted EASA, is autonomous flight. However, “current available technology does not match the anticipated levels of adaptability, rationality and management of uncertainty that aviation products should be meeting to reach autonomy. Nevertheless, the drone market paves the way towards advanced automation and we can see the emergence of new business models striving for the creation of air taxi systems to respond to the demand for urban air mobility.”

Roadmap 2.0 “is intended to serve as a basis for continued discussion with the Agency stakeholders. It is a living document, which will be amended regularly, augmented, deepened, improved through discussions and exchanges of views, but also, practical work on AI development in which the Agency is already engaged.”

DEFINING the SCOPE of AI

The first version of the EASA Roadmap focused on machine learning (ML) and, in particular, deep learning (DL). Roadmap 2.0, however, states: “Even if the use of learning solutions remains predominant in the applications and use cases received from the aviation industry, it turns out that meeting the high safety standards brought by current aviation regulations pushes certain applicants towards a renewed set of knowledge-based AI. This is one of the main drivers for EASA to update this document.” It adds that “those different AI approaches may be used in combination (also known as hybrid AI).”

The key provisions of the EU AI Act include:

- Risk assessment: AI systems will be categorized based on their risk level, and high-risk systems will require a conformity assessment before they can be placed on the market or put into service.

- Ban on unacceptable AI: The regulation prohibits certain AI practices that are considered to be unacceptable and contrary to EU values, such as AI systems that manipulate human behavior, exploit vulnerabilities of specific groups, or use subliminal techniques.

- Transparency and traceability: The regulation requires that AI systems be designed in a way that ensures transparency and traceability, so that users can understand how decisions are made and what data is used to train the system.

- Data governance: The regulation aims to ensure that data used to train AI systems is of high quality and unbiased, and that privacy and data protection rules are respected.

- Human oversight: The regulation requires that AI systems have appropriate human oversight and control, especially in high-risk settings such as healthcare or public services. (Persons assigned must have the necessary competence, training and authority.)

EASA guidelines place great emphasis on the “trustworthiness” of AI, which encompasses data quality and management, documentation and traceability, transparency and information to deployers, human oversight, cybersecurity and robustness.

HUMAN-CENTRIC and ETHICAL

The EU and EASA emphasize “a human-centric approach to AI that respects fundamental rights and values, promotes inclusion and diversity, and supports sustainable and responsible innovation.”

At the High-Level Conference, Ines Berlenga, EASA Project Manager for Ethics in AI, said, “It is important to observe the possible consequences on the humans impacted by these systems. By applying certain key ethical concepts… we contribute to the prevention of eventual injustices or infringements of human rights.”

Key ethical concepts, which were evaluated in a survey, include:

- Equal opportunities

- Non-discrimination and fairness

- Data protection

- Right to privacy

- Transparency

- Accountability

- Labor protection and professional development

One response, addressing pilot Physiological Data Monitoring, objected: “Health and physiological data is sensitive, similar to… being stripped naked and photographed for statistical or measuring reasons. With such exposure I would feel insecure.”

Another, on the Teaming with AI topic, stated: “It is unacceptable to refer to AI as a teammate. I will not attribute human characteristics to it. It is a data-driven decision system providing an output to my team. I would no more consider it a teammate than I do any other automated warning system currently in place.”

Respondents to the industry survey were 80% male and 20% female, predominantly 40 to 59 years old (and thus typically with more than 10 years of professional experience), and 76% worked directly with AI-based systems.

A similar survey of the general public is planned for late 2024 or early 2025.